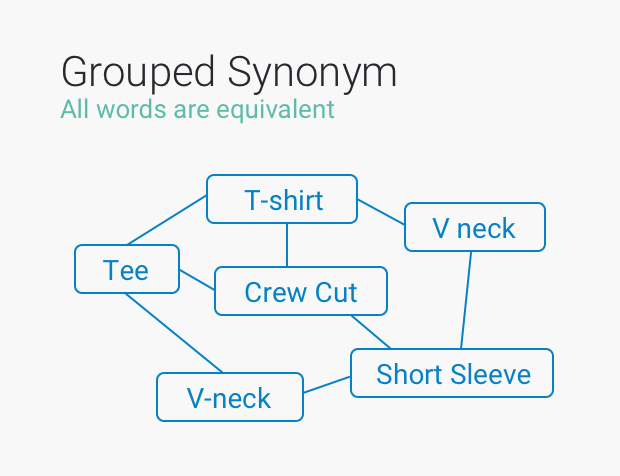

So what can we add to our list of similar contexts? Enrichment on Index Gets Stale These recurring context gaps are why people have always been part of the solution - for thesaurus and dictionary definition management. The question should be: what was the shopper’s intent ? A human retail clerk would pick up the confusion immediately because when the shoppers pointed to their ears, she would understand the context of what they wanted. For example, it turns out that some shoppers inadvertently type “iPods” for “Airpods.” The question shouldn’t be: Is this a synonym (accept or reject)? This method of “supervised learning” gives the wrong impression that closing vocabulary gaps can be just a matter of accepting or declining a suggestion for a rigid synonym relationship. But perhaps a user that has been viewing a couple of DJ speakers already during their session may be looking for a “USB mixer” instead. Merchandisers can be asked if “pants” should be treated as “trousers.” What is the answer if you have both North American and British visitors? Or how about: is mixer a synonym of blender? Surely, in most cases. Synonym Identification - Determined by Intent Distributional similarity represents the relatedness of synonym pairs by the commonality of contexts the words share.īut while this representation is a great start, it isn’t perfect. This “ distributional similarity” is the underpinnings of natural language understanding - and natural language processing. By analyzing the mathematical similarities between those forms, Word2Vec teaches a computer context by highlighting words that are “close to” other similar words. It takes words and produces a vector (vec) of related words - also known as word embedding.

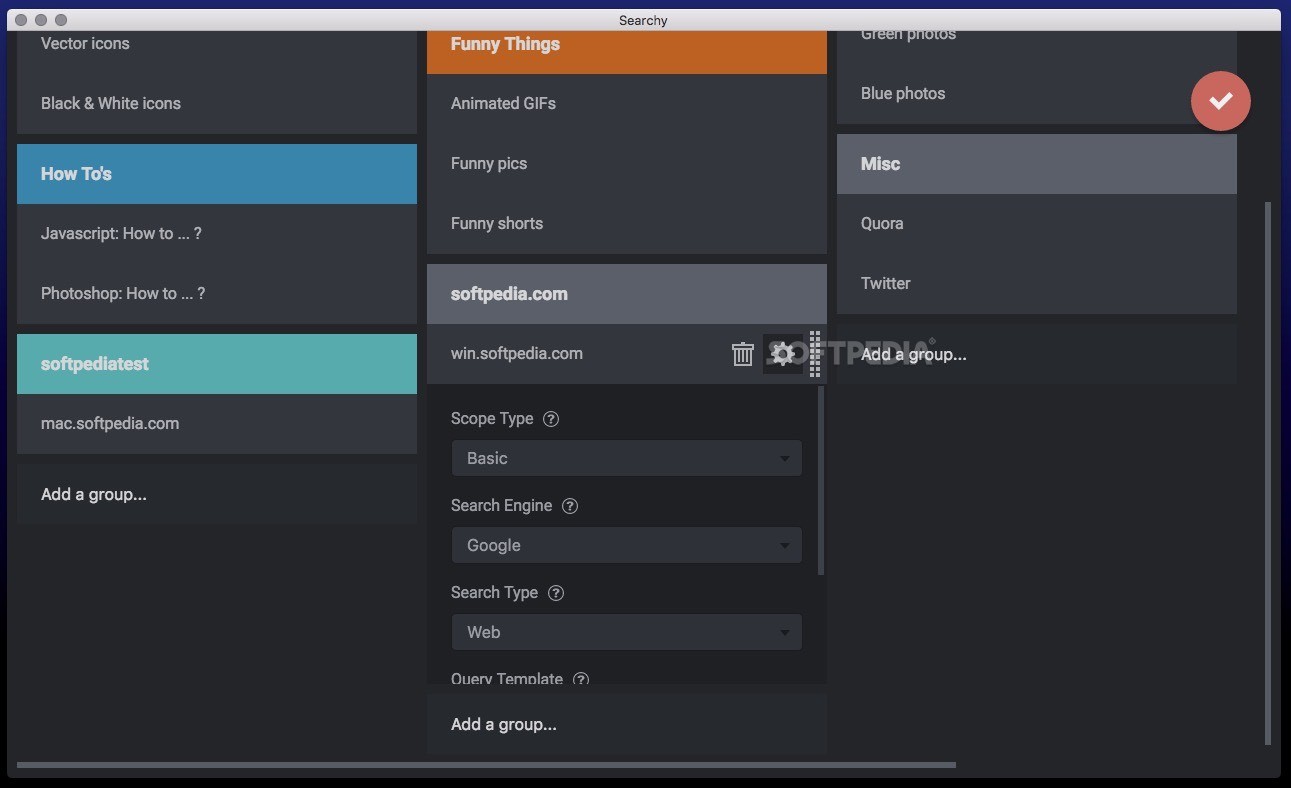

Word2Vec was a computational model written by Tomas Mikolov and his team at Google. It is a good resource - but falls short.Īs an example, it knows that “apple” is a fruit, but doesn’t know it is also a business. The latter is a database of English-language synonyms that contains terms that are semantically grouped. Word2Vec Still Needs Contextįor instance, most vendors will use Word2Vec or WordNet to find related words. However, the computational linguistics typically employed offer overly simplistic solutions to really complex problems. They know it and the industry inside and out. This isn’t due to the fact that your merchandisers and product managers lack outstanding knowledge of your catalog. They offer the same “opportunity” for typo corrections, placing on merchandisers the heavy burden of approving or declining other semantic similarities generated by machine learning.Īs you can imagine, this can result in hours and hours spent (or more accurately, wasted) by your team in judging the value of a given synonym extraction. Automatic Synonym Detection … as Manual Process?Īnd as it turns out, other machine learning vendors don’t just stop at offering up related words for approval. Your merchandisers would rather focus on more creative work than be stuck with dull, repetitive tasks. Unfortunately, this is less of an opportunity and more of a burden that results in added costs and poor employee experience. And maybe you can reject that Airpods and iPods are similar words. After all, you get to affirm (or not) if a given word is synonymous with another - for example, that “iWatch” and “Apple watch” are semantic relations.

This ability to accept or reject results might sound initially appealing. Yet for some reason, after we advanced to the point of letting the machine do that work for us, we still bring in the humans to verify the words we have extracted. After all, who wants to dig around in query logs trying to figure out all the ways people are spelling words or spending their time developing a stop word list? The promise of automatic synonym detection is a key feature request of most search architects.
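To make the “mixer” example a little more concrete, here is a minimal Python sketch of distributional similarity. It is not Word2Vec and not any vendor’s implementation: it simply counts which words share a sentence and compares those counts with cosine similarity. The tiny corpus, the “controller” candidate, and the `best_sense` helper are all invented for illustration.

```python
from collections import Counter
from math import sqrt

# Toy corpus, invented purely for illustration (not real query logs).
CORPUS = [
    "blend fruit smoothie with kitchen blender",
    "blend fruit smoothie with kitchen mixer",
    "connect dj speakers to usb mixer",
    "connect dj speakers to usb controller",
]


def context_vectors(sentences):
    """For each word, count the other words that appear in the same sentence."""
    vectors = {}
    for sentence in sentences:
        tokens = sentence.split()
        for i, word in enumerate(tokens):
            others = tokens[:i] + tokens[i + 1:]
            vectors.setdefault(word, Counter()).update(others)
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = sqrt(sum(v * v for v in a.values())) * sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


VECTORS = context_vectors(CORPUS)


def best_sense(session_tokens, candidates):
    """Hypothetical helper: pick the candidate whose contexts best match the session."""
    session = Counter(session_tokens)
    return max(candidates, key=lambda word: cosine(session, VECTORS[word]))


if __name__ == "__main__":
    # "mixer" shares contexts with both candidates, so similarity alone cannot decide.
    print(cosine(VECTORS["mixer"], VECTORS["blender"]))
    print(cosine(VECTORS["mixer"], VECTORS["controller"]))
    # What the shopper did earlier in the session breaks the tie.
    print(best_sense(["dj", "speakers"], ["blender", "controller"]))
    print(best_sense(["fruit", "smoothie"], ["blender", "controller"]))
```

In this toy data “mixer” is equally close to “blender” and “controller,” which is exactly why a rigid accept-or-reject synonym decision falls short. Only the session context separates the two intents.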
